
11/29/2023

Data astronomer apache airflow insight

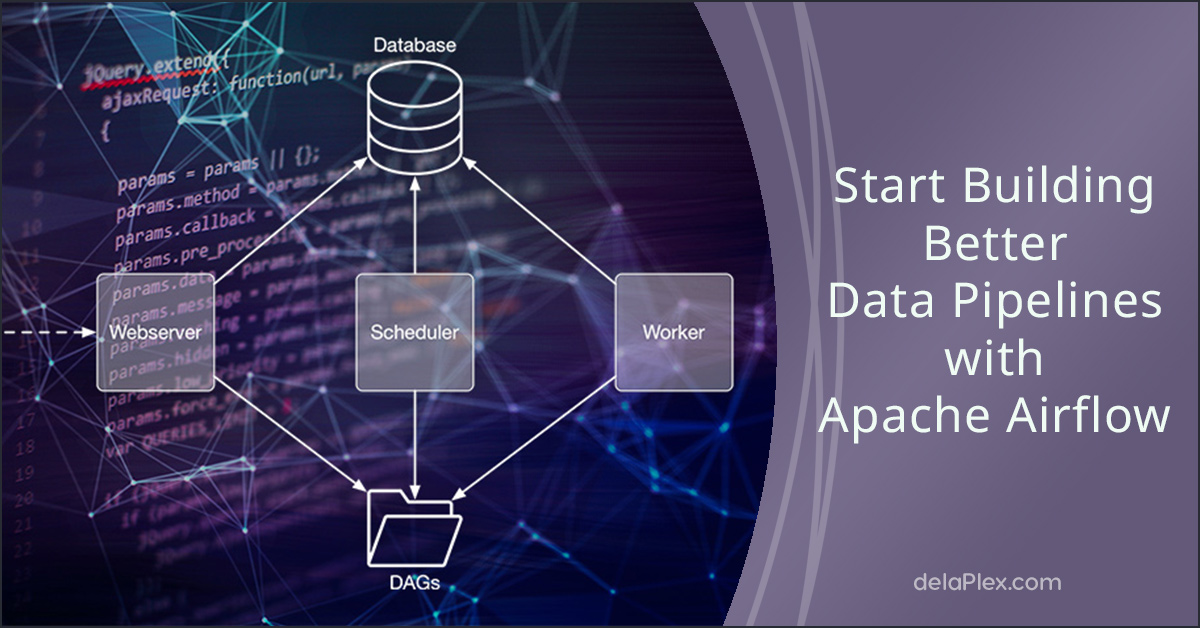

Apache Airflow is an open source tool for programmatically authoring, scheduling, and monitoring data pipelines. It has over 9 million downloads per month and an active OSS community. Airflow allows data practitioners to define their data pipelines as Python code in a highly extensible and scalable way. It started as an open source project at Airbnb: in 2015, the company was growing rapidly and struggling to manage the vast quantities of internal data it generated every day.

Astronomer effectively took over Airflow's development and maintains it as an open source product while also shipping its own Astro product, a data engineering orchestration platform that enables data engineers, data scientists, and data analysts to build, run, and observe pipelines-as-code. Airflow is almost the industry-standard way of doing DataOps.

Astronomer has now gained $283 million in total funding. The latest round was led by Insight Ventures with Meritech Capital, Salesforce Ventures, J.P. Morgan, K5 Global, Sutter Hill Ventures, Venrock and Sierra Ventures.

There is a need to discover, observe and verify the lineage (the sequence) of the data involved, and Astronomer's acquisition of data lineage company Datakin gives it an end-to-end method to achieve this, using lineage metadata. Datakin's website promises: “With lineage, organizations can observe and contextualize disparate pipelines, stitching everything together into a single navigable map.”
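To make the lineage idea a little more concrete, here is a minimal sketch in plain Python. It is not Datakin's or Astronomer's implementation. The job names, dataset names, and the `build_upstream_map` and `trace` helpers are all hypothetical, and the only assumption is that each job reports which datasets it reads and writes.

```python
from collections import defaultdict

# Hypothetical lineage events: each job lists the datasets it reads and writes.
EVENTS = [
    {"job": "ingest_bookings", "inputs": ["raw.bookings"], "outputs": ["staging.bookings"]},
    {"job": "clean_bookings", "inputs": ["staging.bookings"], "outputs": ["clean.bookings"]},
    {"job": "build_report", "inputs": ["clean.bookings", "clean.hosts"], "outputs": ["report.revenue"]},
]


def build_upstream_map(events):
    """Map each dataset to the datasets it was directly derived from."""
    upstream = defaultdict(set)
    for event in events:
        for output in event["outputs"]:
            upstream[output].update(event["inputs"])
    return upstream


def trace(dataset, upstream):
    """Return every dataset that feeds, directly or indirectly, into `dataset`."""
    seen = set()
    stack = [dataset]
    while stack:
        current = stack.pop()
        for parent in upstream.get(current, ()):
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen
```

Calling `trace("report.revenue", build_upstream_map(EVENTS))` walks back through all three jobs, which is the "single navigable map" idea in miniature: separate pipelines are joined wherever one job's output is another job's input.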

Airbnb created Airflow, using Python (procedure-as-code), to automate its own data pipeline engineering, and donated it to the Apache Software Foundation. DAGs (directed acyclic graphs) enable pipelines to be planned and instantiated. The diagram shows an example DAG, with the B or C procedures dependent on the type of data in A and the desired end-point analytic run. If the D step is left out of the sequence then the overall procedure will fail.

There are more than eight million Airflow downloads a month and hundreds of thousands of data engineering teams across a whole swath of businesses use it, such as Credit Suisse, Condé Nast, Electronic Arts, and Rappi. Astronomer CEO Joe Otto wrote that Airflow's “comprehensive orchestration capabilities and flexible, Python-based pipelines-as-code model rapidly made it the most popular open-source orchestrator available.”
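The DAG described above can be sketched with nothing but the Python standard library. This is an illustration of the idea, not Airflow's API. The step names A to D come from the diagram, while the dependency table, the `plan` and `run` helpers, and the rule that "tabular" data takes the B branch are assumptions made up for the example.

```python
from graphlib import TopologicalSorter

# Hypothetical DAG mirroring the diagram: A feeds B or C, and D comes last.
# Each key maps a step to the set of steps it depends on.
DEPENDENCIES = {
    "A": set(),
    "B": {"A"},
    "C": {"A"},
    "D": {"B", "C"},
}


def plan(data_type: str) -> list[str]:
    """Return the steps to run, in dependency order, for one kind of input."""
    # Assumed branching rule: tabular data goes through B, anything else through C.
    branch = "B" if data_type == "tabular" else "C"
    skipped = {"B", "C"} - {branch}
    order = TopologicalSorter(DEPENDENCIES).static_order()
    return [step for step in order if step not in skipped]


def run(steps: list[str]) -> str:
    """Pretend to execute the steps; fail if the final step D is missing."""
    if "D" not in steps:
        raise RuntimeError("step D is missing, so the pipeline cannot complete")
    return " -> ".join(steps)
```

Here `plan("tabular")` selects the A, B, D path and any other data type selects A, C, D. Passing `run` a list without D raises an error, which mirrors the point that the overall procedure fails when that step is left out.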

Airbnb's data came from various sources in various formats and needed collecting, filtering, assembling, cleaning, and organizing into sets that could be sent for analysis according to schedules. In effect, this is an Extract, Transform and Load (ETL) process with multiple data pipelines which needed creating, developing, maintaining and scheduling.

Astronomer CEO Joe Otto blogged: “Astronomer delivers a modern data orchestration platform, powered by Apache Airflow, that empowers data teams to build, run, and observe data pipelines.”
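As a rough illustration of the collect, clean, and organize steps in an ETL process, here is a small standard-library sketch. It is not Airbnb's or Astronomer's code. The `city` and `nights` fields, the two input formats (CSV and JSON), and the in-memory dictionary standing in for an analytics store are all assumptions chosen for the example.

```python
import csv
import io
import json


def extract(csv_text: str, json_text: str) -> list[dict]:
    """Collect rows from two differently formatted sources into one list."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    rows.extend(json.loads(json_text))
    return rows


def transform(rows: list[dict]) -> list[dict]:
    """Filter out incomplete rows and normalize the remaining fields."""
    cleaned = []
    for row in rows:
        city = str(row.get("city", "")).strip()
        nights = row.get("nights")
        if not city or nights in (None, ""):
            continue  # drop rows missing a required field
        cleaned.append({"city": city.title(), "nights": int(nights)})
    return cleaned


def load(rows: list[dict], store: dict) -> dict:
    """Add up nights per city in a dict that stands in for an analytics store."""
    for row in rows:
        store[row["city"]] = store.get(row["city"], 0) + row["nights"]
    return store
```

Chaining the three as `load(transform(extract(csv_text, json_text)), {})` gives one pass of the pipeline. In a real deployment each function would be a separately scheduled and monitored step, which is the work an orchestrator takes on.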

An outfit called Astronomer has landed $213 million in funding to continue developing its data operations engineering software so users get cleaner data assembled and organized faster for analytic runs. It has also bought data lineage company Datakin. The software is based on Apache Airflow, code originally developed by Airbnb to automate what it called its data engineering pipelines. These pipelines fed data gathered from the operation of its global host accommodation booking business to analytics routines, so Airbnb could track its business performance.
